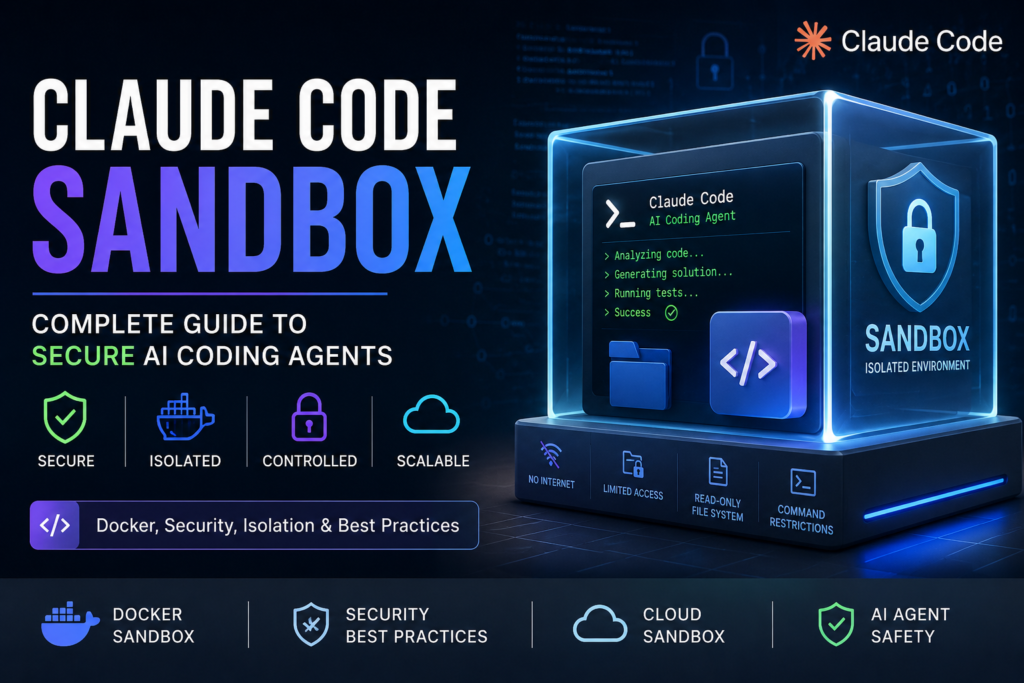

Claude Code Sandbox: Complete Guide to Secure AI Coding Agents (2026)

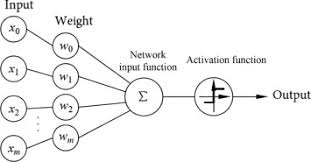

Artificial intelligence coding agents are becoming increasingly autonomous. Tools like Anthropic’s Claude Code can write code, run terminal commands, install dependencies, modify files, access APIs, and even execute development workflows automatically.

That level of autonomy is powerful, but also dangerous. Without proper isolation, an AI coding agent could accidentally delete important files, expose API keys, install malicious packages, leak confidential data, or execute unintended shell commands. This is where Claude Code sandboxing becomes essential.

In this guide, you’ll learn what Claude Code sandboxing is, how it works, why developers are using Docker and cloud sandboxes, which security practices matter most, and how to build a safer AI coding workflow.

What Is a Claude Code Sandbox?

Claude Code sandboxing means running an AI coding agent inside an isolated environment with restricted permissions. Instead of giving the AI unrestricted access to your computer, the sandbox limits file access, network access, shell commands, external tools, and system permissions. Think of it as giving an AI developer its own controlled workspace instead of the keys to your entire machine.

Why Claude Code Sandboxing Matters

AI coding agents are fundamentally different from traditional code assistants. Modern agents can autonomously execute tasks, iterate on code, run tests, browse repositories, install packages, and make decisions independently. This creates a new attack surface: recent security research has shown that poorly isolated AI agents may perform unintended actions, expand their scope autonomously, or bypass weak sandbox configurations.

How the Claude Code Sandbox Works

Anthropic introduced native sandboxing for Claude Code that relies on operating-system-level isolation.
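Enforcing isolation in the operating system matters because application-level checks are easy to get wrong. The bash sketch below uses a hypothetical helper, `is_inside_workspace`, to illustrate the file-access rule. It resolves `..` and symlinks before deciding whether a path stays inside the workspace, and it assumes GNU coreutils `realpath -m`. It is not how Claude Code implements its sandbox.

```bash
#!/usr/bin/env bash
# Illustrative sketch: an application-level path check.
# Assumes GNU coreutils realpath (for the -m flag). Claude Code's native
# sandbox enforces this kind of boundary in the OS, not in a shell function.
set -euo pipefail

# is_inside_workspace ROOT PATH  (hypothetical helper)
# Succeeds if PATH, after resolving ".", ".." and existing symlinks,
# is ROOT itself or lies beneath it; fails otherwise.
is_inside_workspace() {
  local root target
  root=$(realpath -m -- "$1")
  target=$(realpath -m -- "$2")
  [[ "$target" == "$root" || "$target" == "$root"/* ]]
}

# Demo: a temporary workspace containing a symlink that points outside it.
demo_ws=$(mktemp -d)
ln -s /etc "$demo_ws/etc-link"
for p in "$demo_ws/src/app.py" \
         "$demo_ws/../.ssh/id_rsa" \
         "${demo_ws}-evil/notes.txt" \
         "$demo_ws/etc-link/passwd"; do
  if is_inside_workspace "$demo_ws" "$p"; then
    echo "allow $p"
  else
    echo "deny  $p"
  fi
done
rm -rf -- "$demo_ws"
```

Only the first path is allowed. A naive string-prefix test would have accepted all four, because each one begins with the workspace path. This is one reason kernel-enforced isolation is preferred over checks like this.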
The native sandbox typically enforces these layers of restriction:

| Security layer | Purpose |
|---|---|
| File system isolation | Prevents access outside allowed directories |
| Network filtering | Blocks unauthorized internet access |
| Command restrictions | Limits dangerous shell execution |
| Tool permissions | Controls external integrations |
| Container isolation | Separates the AI runtime from the host system |

On Linux, sandboxing often relies on Bubblewrap, namespaces, cgroups, and seccomp filters. On macOS, it relies on Apple’s Seatbelt sandbox.

Claude Code Sandbox vs Docker

Many developers confuse Claude Code sandboxing with Docker containers. The two are related, but not identical.

| Feature | Claude native sandbox | Docker sandbox |
|---|---|---|
| Built into Claude Code | Yes | No |
| Isolation from the host | Limited | Strong |
| Easy setup | Very easy | Moderate |
| Custom environments | Limited | Excellent |
| CI/CD support | Basic | Excellent |
| Enterprise security | Moderate | Strong |
| Scalability | Moderate | Excellent |

Docker containers still share the host kernel, so even a well-configured container is a weaker boundary than a virtual machine. For serious production workflows, many teams combine Claude sandboxing, Docker containers, cloud infrastructure, network policies, and audit logging.

Best Claude Code Sandbox Architectures

1. Local Sandbox

Best for: solo developers, experimentation, local projects.

Architecture: Claude Code runs in a local container with a restricted file system and a network allowlist.

Pros: fast, easy to set up, low latency. Cons: weaker isolation and more risk to the local machine.

2. Docker-Based Sandbox

Best for: professional developers, teams, secure workflows.

Architecture: Claude Code runs in a Docker container with a mounted workspace, isolated dependencies, and restricted networking.

Benefits: reproducible environments, stronger isolation, safer automation.

3. Cloud Sandbox

Best for: enterprises, autonomous agents, CI/CD automation.

Platforms increasingly offer cloud sandbox execution environments for AI agents.

Benefits: isolation from developer machines, scalable infrastructure, ephemeral environments, centralized logging.
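The ephemeral-environment idea also works on a single machine. In the minimal sketch below, each session runs on a throwaway copy of the project, and its changes are printed as a diff for review before anything touches the real tree. The `run_in_scratch_copy` helper is hypothetical and is not part of Claude Code or Docker.

```bash
#!/usr/bin/env bash
# Sketch of a disposable workspace: the command runs on a throwaway copy,
# and the real project only changes if a human applies the diff later.
set -euo pipefail

# run_in_scratch_copy PROJECT_DIR COMMAND...  (hypothetical helper)
# Copies PROJECT_DIR into a fresh temp dir, runs COMMAND there, prints a
# unified diff of the changes, deletes the copy, and returns COMMAND's status.
run_in_scratch_copy() {
  local project=$1
  shift
  local scratch status=0
  scratch=$(mktemp -d)
  cp -a -- "$project/." "$scratch/"
  (cd "$scratch" && "$@") || status=$?
  # diff exits with 1 when files differ, which is expected here.
  diff -ruN -- "$project" "$scratch" || true
  rm -rf -- "$scratch"
  return "$status"
}

# Demo: the edit and the new file appear only in the diff.
demo=$(mktemp -d)
printf 'hello\n' > "$demo/greeting.txt"
run_in_scratch_copy "$demo" sh -c 'printf "hi\n" > greeting.txt; touch new.txt'
cat "$demo/greeting.txt"   # prints "hello": the original was never modified
rm -rf -- "$demo"
```

Copying the tree is a convenience boundary, not a security boundary. The command still runs with your user's permissions, so pair this pattern with a container or the native sandbox.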
Example: Running Claude Code in Docker

A basic secure workflow looks like this:

```bash
# Disposable container (--rm) with no network access that mounts only
# the current project directory at /workspace.
# claude-code-sandbox stands for a locally built image containing Claude Code.
docker run \
  --rm \
  -it \
  --network none \
  -v "$(pwd)":/workspace \
  -w /workspace \
  claude-code-sandbox
```

This setup disables internet access, isolates dependencies, limits file exposure to the mounted project, and creates a disposable environment. Note that `--network none` also blocks the agent's own connection to Anthropic's API. To run Claude Code itself inside the container, you need a network restricted to approved domains (for example, an egress proxy or firewall that permits only the API and trusted package registries) rather than no network at all.

Common Security Risks

Prompt Injection

Malicious instructions hidden inside repositories, documentation, markdown files, or websites can manipulate the AI into unsafe behavior.

Credential Leakage

Without isolation, Claude Code might access SSH keys, environment variables, API secrets, and cloud credentials.

Dangerous Package Installation

AI agents may unintentionally install compromised packages or malware, or fall victim to dependency-chain attacks.

Network Exfiltration

Weak network policies may allow unauthorized outbound connections. Recent sandbox bypass research highlighted this exact issue.

Best Practices for Claude Code Security

Use Disposable Environments

Treat every AI coding session as temporary.

Restrict Internet Access

Only allow approved domains.

Limit File Access

Expose only the required project directory.

Use Read-Only Mounts When Possible

Use them especially for production configs, secrets, and infrastructure files.

Avoid Running as Root

Never let containers execute with elevated privileges.

Audit AI Actions

Log commands, file changes, network requests, and package installations.

Claude Code Sandbox vs Cursor vs Codex

| Feature | Claude Code | Cursor | Codex CLI |
|---|---|---|---|
| Native sandboxing | Yes | Limited | Moderate |
| Permission controls | Strong | Moderate | Moderate |
| Enterprise readiness | High | Medium | Medium |
| Cloud sandbox support | Growing | Limited | Limited |
| Security focus | Very strong | Moderate | Moderate |

Future of AI Coding Sandboxes

The next generation of AI development environments will likely include ephemeral cloud workspaces, autonomous debugging agents, isolated execution forks, agent orchestration, policy-based security systems, and hardware-level isolation.
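Policy-based controls can start very simply. The sketch below shows the idea behind command restrictions as a hypothetical bash function, `check_command`, with an illustrative allowlist. Both the function and the allowlist are assumptions, not Claude Code's actual rules. A proposed command passes only if its program is allowlisted and it contains no operators that could chain, pipe, substitute, or redirect.

```bash
#!/usr/bin/env bash
# Sketch of a policy check for agent-proposed shell commands.
# The allowlist below is an illustrative assumption, not Claude Code's rules.
set -euo pipefail

# Programs the agent may run without asking a human first (hypothetical).
ALLOWED_PROGRAMS=(ls cat grep git pytest)

# check_command COMMAND_STRING  (hypothetical helper)
# Succeeds only if the command contains no chaining, piping, substitution,
# redirection or newlines, and its first word is an allowlisted program.
check_command() {
  local cmd=$1 first prog
  case $cmd in
    *';'* | *'&'* | *'|'* | *'`'* | *'$('* | *'>'* | *'<'* | *$'\n'*)
      return 1 ;;
  esac
  read -r first _ <<< "$cmd"
  for prog in "${ALLOWED_PROGRAMS[@]}"; do
    if [[ "$first" == "$prog" ]]; then
      return 0
    fi
  done
  return 1
}

# Demo: anything not clearly allowed is escalated to a human.
for c in "git status" "pytest -q" "curl -s https://example.com" "ls && rm -rf ~"; do
  if check_command "$c"; then
    echo "allow: $c"
  else
    echo "ask:   $c"
  fi
done
```

An allowlisted program can still be misused through its arguments. For instance, `cat` can still read a `.env` file inside the workspace. Checks like this therefore complement OS-level isolation rather than replace it.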
AI coding tools are rapidly evolving from assistants into semi-autonomous developers. Sandboxing will become a core requirement, not an optional feature.

Frequently Asked Questions

Is the Claude Code sandbox safe?

It significantly improves security, but no sandbox is perfect. Developers should still layer on Docker, restricted permissions, network policies, and monitoring.

Does Claude Code use Docker?

Not necessarily. Native sandboxing uses OS-level isolation, but many developers combine it with Docker for stronger security.

Can Claude Code access my files?

Yes, if permissions allow it. Sandboxing restricts which files the AI can access.

What is the best Claude Code sandbox setup?

For most developers, the safest practical approach is an ephemeral Docker container with restricted networking and only the project directory mounted.
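The launcher sketched below combines the recommended setup with the best practices above (no root user, read-only file system). The flags are standard `docker run` options. The image name `claude-code-sandbox` and the `RUN` variable are assumptions for illustration. By default the script only prints the command it would run.

```bash
#!/usr/bin/env bash
# Sketch of a hardened, disposable container launch for an agent session.
# Assumes a locally built image (default name claude-code-sandbox, not an
# official image). Prints the command unless RUN=1 and docker is installed.
set -euo pipefail

IMAGE=${IMAGE:-claude-code-sandbox}

args=(
  run --rm -it
  --network none                     # no network access at all
  --read-only                        # root file system is immutable
  --tmpfs /tmp                       # writable, memory-backed scratch space
  --cap-drop ALL                     # drop all Linux capabilities
  --security-opt no-new-privileges   # block privilege gains via setuid
  --user "$(id -u):$(id -g)"         # run as your user, not root
  -v "$PWD":/workspace               # expose only the project directory
  -w /workspace
  "$IMAGE"
)

if [[ ${RUN:-0} == 1 ]] && command -v docker >/dev/null; then
  exec docker "${args[@]}"
else
  # Dry run: show the exact command, shell-quoted.
  printf 'docker'
  printf ' %q' "${args[@]}"
  printf '\n'
fi
```

If Claude Code itself runs inside this container, replace `--network none` with a network restricted to approved domains, because the agent must reach Anthropic's API. With `--read-only`, any tool that writes outside `/tmp` and `/workspace` (to a home directory, for example) needs an additional writable mount.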